If you have been living in chatbots for the past two years, Physical AI is the rude awakening: intelligence that has to deal with gravity, friction, clutter, and people who wander into the scene at exactly the wrong moment. In plain terms, it is AI that can sense the real world and take physical actions in it, not just produce text or images.

That sounds obvious until you remember how often AI still means a model that never leaves a server. Physical AI has a body somewhere, even if that body is a robot arm, a warehouse vehicle, or a camera network that triggers actions outside the screen.

What Is Physical AI

Here is the clean definition: AI that operates in and interacts with the physical world, typically by combining models with sensors and actuators so the system can perceive, decide, act, and learn from results.

IBM describes physical AI as models used in physical space by pairing AI with sensors, actuators, and control systems. NVIDIA frames it as autonomous systems, from robots to self-driving cars, that can perceive, reason, and perform complex actions in the physical world.

Researchers often use sibling terms. A 2025 survey explains embodied AI as agents that must handle the unpredictability of the physical world rather than only solving abstract tasks in software. Another survey points out that embodied intelligence depends on tight coupling among control, body, and environment.

So when people loosely say physical technology or physical intelligence, what they usually mean is this: the model is not the whole product. The model sits inside a loop with sensors, motors, safety limits, and a lot of engineering tradeoffs.

How Physical AI Works in the Real World

You can think of physical artificial intelligence as a control loop that runs many times per second. The hardware differs by application, but the pattern is stable:

First, sensors observe the world. That can be cameras, depth sensors, lidar, force sensors, joint encoders, microphones, or temperature probes, depending on the job.

Second, the system estimates what is happening. In robotics, that often means locating itself, estimating where objects are, and tracking change from frame to frame. Modern work increasingly leans on large multimodal models to interpret scenes and human instructions.

Third, it chooses an action. Sometimes that is classic planning plus control. Sometimes it is a learned policy from reinforcement learning or imitation learning. In practice, many teams mix the two because each side fails in different ways.

Fourth, it acts through motors or other actuators, measures what happened, and corrects. In physical settings, small errors compound quickly, so feedback is not optional; it is the job.

If you are wondering how physical AI works, the short answer is that you ship a loop, not a model. Good physical AI systems treat perception, decision making, and control as one unit with clear failure points, not a stack of disconnected demos.
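To make the loop concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: a one-dimensional "robot", a proportional controller, an actuator that delivers only 90% of each command, and a constant drift. It is not any vendor's control stack. It only shows why re-measuring on every cycle beats executing a fixed plan.

```python
import random


def run_loop(target, steps=200, gain=0.5, use_feedback=True, seed=0):
    """Move a 1-D position toward `target` and return the final absolute error.

    All dynamics here are made up for illustration: the actuator delivers
    90% of each command and the world drags the position back a little.
    """
    rng = random.Random(seed)
    position = 0.0
    planned_step = target / steps  # open-loop plan: equal steps, never re-checked

    for _ in range(steps):
        # 1. Sense: a slightly noisy reading of the true position.
        reading = position + rng.gauss(0.0, 0.001)
        # 2. Estimate: how far the reading says we are from the target.
        error = target - reading
        # 3. Decide: correct in proportion to the error, or follow the fixed plan.
        command = gain * error if use_feedback else planned_step
        # 4. Act: the hypothetical actuator under-delivers and the world drifts.
        position += 0.9 * command - 0.001

    return abs(target - position)


closed_loop_error = run_loop(1.0, use_feedback=True)
open_loop_error = run_loop(1.0, use_feedback=False)
print(f"with feedback: {closed_loop_error:.4f}, without: {open_loop_error:.4f}")
```

With these made-up numbers, the fixed plan ends about 0.3 short of a target of 1.0, while the feedback version settles within a few thousandths of it. The plan was not wrong on paper; it simply never checked what the actuator actually did.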

How Physical AI Gets Trained

Training is where the romance dies and the work begins. Real-world data is slow to gather, costly, and sometimes risky. That is why simulation and synthetic data keep showing up in serious discussions of physical AI systems.

One classic headache is the reality gap: a policy that looks great in simulation can fall apart on real hardware. Researchers use methods like domain randomization, which trains on wide simulated variation so the real world looks like just another variation.
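A toy version of domain randomization fits in a few lines. The sketch below assumes a one-dimensional simulator with a single uncertain parameter, actuator efficiency, and "trains" by picking a controller gain from a small grid. The names `final_error` and `tune_gain`, the parameter range, and the "real robot" value are all hypothetical. Real systems randomize many parameters and train neural policies, but the shape of the idea is the same.

```python
import random

TARGET = 1.0
CANDIDATE_GAINS = [k / 5 for k in range(1, 8)]  # 0.2, 0.4, ..., 1.4


def final_error(gain, efficiency, steps=10):
    """Roll out a proportional controller in a toy simulator; return the final error."""
    position = 0.0
    for _ in range(steps):
        position += efficiency * gain * (TARGET - position)
    return abs(TARGET - position)


def tune_gain(efficiencies):
    """Pick the candidate gain with the lowest mean error across the given simulators."""
    def mean_error(gain):
        return sum(final_error(gain, e) for e in efficiencies) / len(efficiencies)
    return min(CANDIDATE_GAINS, key=mean_error)


rng = random.Random(0)
# Nominal training: one simulator with the "datasheet" actuator efficiency.
nominal_gain = tune_gain([1.0])
# Domain randomization: many simulators, each with a randomly drawn efficiency.
randomized_gain = tune_gain([rng.uniform(0.6, 2.2) for _ in range(50)])

# A hypothetical real robot whose actuator is far stronger than the datasheet says.
real_efficiency = 2.1
print("nominal gain on real robot:   ", final_error(nominal_gain, real_efficiency))
print("randomized gain on real robot:", final_error(randomized_gain, real_efficiency))
```

The gain tuned on the single nominal simulator is perfect there and overshoots badly on the stronger actuator. The gain tuned across randomized simulators is more conservative, gives up a little performance in the nominal case, and still converges on the "real" one.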

The 2017 domain randomization paper by Josh Tobin and coauthors is a widely cited starting point. A later survey on sim-to-real transfer in deep reinforcement learning reviews families of techniques, including randomizing sensor and physics parameters, system identification, and tuning on real data.

Many teams also rely on learning from demonstration, where a person teleoperates or guides a robot and the system learns from those examples. Survey work on imitation learning and demonstration learning explains why this is often safer and faster than trial-and-error learning on real machines, especially when failure can break hardware or hurt someone.
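The simplest form of learning from demonstration is behavior cloning: record what the operator saw and did, then fit a policy that reproduces it. The sketch below is an assumption-heavy toy. The "teleoperator" is a simulated rule with some jitter, the policy has one parameter, and the fit is closed-form least squares. Real pipelines use camera images and neural networks, but the data flow is the same.

```python
import random


def collect_demonstrations(n=200, expert_gain=0.8, seed=0):
    """Simulate a teleoperator: for each observed offset, record the action taken.

    The "expert" here is a made-up rule (move 80% of the way) plus hand jitter.
    """
    rng = random.Random(seed)
    demos = []
    for _ in range(n):
        offset = rng.uniform(-1.0, 1.0)  # observation: target minus position
        action = expert_gain * offset + rng.gauss(0.0, 0.01)  # what the human did
        demos.append((offset, action))
    return demos


def fit_policy(demos):
    """Behavior cloning with a one-parameter linear policy: action = gain * offset.

    Closed-form least squares through the origin.
    """
    numerator = sum(offset * action for offset, action in demos)
    denominator = sum(offset * offset for offset, _ in demos)
    return numerator / denominator


learned_gain = fit_policy(collect_demonstrations())


def policy(offset):
    """The cloned policy: imitate the demonstrator's response to an offset."""
    return learned_gain * offset


print(f"learned gain: {learned_gain:.3f}")
```

Notice that no robot had to fail for the policy to learn. The cost is that the policy only knows the situations the demonstrator visited, which is one reason teams combine demonstrations with simulation and careful evaluation.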

A newer thread is world models: models that try to learn environment structure so agents can plan using predicted futures. This idea shows up both in research surveys and in industry investment.

A February 18, 2026 Reuters report describes spatial intelligence as reasoning about how the 3D world works, and ties that direction to World Labs, founded by Fei-Fei Li. A 2025 survey of world models for embodied AI notes that the term covers multiple roles, from predicting future states to acting as environment simulators for training agents.
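Planning with predicted futures can be sketched in miniature. In the toy below, the agent first fits a one-parameter model of how actions move it, then plans by imagining every short action sequence inside that model and executing only the first step before replanning. The environment, the function names, and the cost are assumptions for illustration; this is not the method of World Labs or of any surveyed system.

```python
import itertools
import random


def true_step(position, action):
    """The real environment (unknown to the agent): each action moves 0.5 units."""
    return position + 0.5 * action


def learn_model(n=100, seed=0):
    """Fit a one-parameter world model, next = position + effect * action, from data."""
    rng = random.Random(seed)
    numerator = denominator = 0.0
    for _ in range(n):
        position, action = rng.uniform(-1, 1), rng.uniform(-1, 1)
        delta = true_step(position, action) - position
        numerator += action * delta
        denominator += action * action
    return numerator / denominator


def plan(position, goal, effect, horizon=3, actions=(-1, 0, 1)):
    """Imagine every short action sequence with the model; return the best first action."""
    def imagined_cost(sequence):
        predicted, cost = position, 0.0
        for action in sequence:
            predicted += effect * action  # roll the model forward, not the world
            cost += abs(goal - predicted)
        return cost
    best = min(itertools.product(actions, repeat=horizon), key=imagined_cost)
    return best[0]


effect = learn_model()
position, goal = 0.0, 2.0
for _ in range(6):
    position = true_step(position, plan(position, goal, effect))  # act, then replan
print(f"learned effect: {effect:.3f}, final position: {position}")
```

The agent never queries the real environment while planning. It only touches the world to collect the initial data and to execute one action per cycle, which is the appeal of world models when real trials are slow or risky.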

Physical AI vs Generative AI

The phrase physical AI vs generative AI is not a cage match. It is a scope question.

Generative AI is mainly about producing content: text, images, audio, code. It can be wildly useful, and it can also be blissfully unconcerned with whether the output will crash into a wall.

Physical AI is about producing actions in a world that bites back. Models have to respect timing, safety, and physics. That changes what good looks like. A robot that is 95% correct but occasionally grips too hard is not charming; it is a safety investigation waiting to happen.

Where they overlap is the brain layer. Large multimodal models can help interpret instructions and scenes, and they can sit above lower-level motor skills that handle grasping, walking, or navigation. Google DeepMind describes RT-2 as a vision-language-action model trained on both web data and robotics data so it can translate language and images into robot actions.
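The layering can be sketched as a dispatcher: something interprets the instruction, and separate low-level skills do the moving. The code below is only a structural illustration. The `interpret` function is a keyword-matching stand-in for a multimodal model, the skills are stubs that return strings, and none of it reflects how RT-2 or any specific model works internally.

```python
from typing import Callable, Dict, List


def grasp(thing: str) -> str:
    """Stub for a low-level grasping controller."""
    return f"grasp({thing})"


def navigate(place: str) -> str:
    """Stub for a low-level navigation controller."""
    return f"navigate({place})"


# Hypothetical skill library: phrases the interpreter knows how to hand off.
SKILLS: Dict[str, Callable[[str], str]] = {
    "pick up": grasp,
    "go to": navigate,
}


def interpret(instruction: str) -> List[str]:
    """Stand-in for the brain layer: turn an instruction into a list of skill calls."""
    plan = []
    for clause in instruction.lower().split(" then "):
        clause = clause.strip()
        for phrase, skill in SKILLS.items():
            if clause.startswith(phrase):
                plan.append(skill(clause[len(phrase):].strip()))
                break
        else:
            # Refuse rather than guess when no known skill matches.
            raise ValueError(f"no skill for: {clause!r}")
    return plan


print(interpret("Go to the shelf then pick up the red box"))
```

One design choice worth noting: an instruction that matches no skill raises an error instead of being mapped to the closest guess. In a physical system, declining to act is usually the cheaper failure.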

Where It Is Being Used Right Now

Let’s break it down with physical AI use cases you can actually point to, not hand-waving.

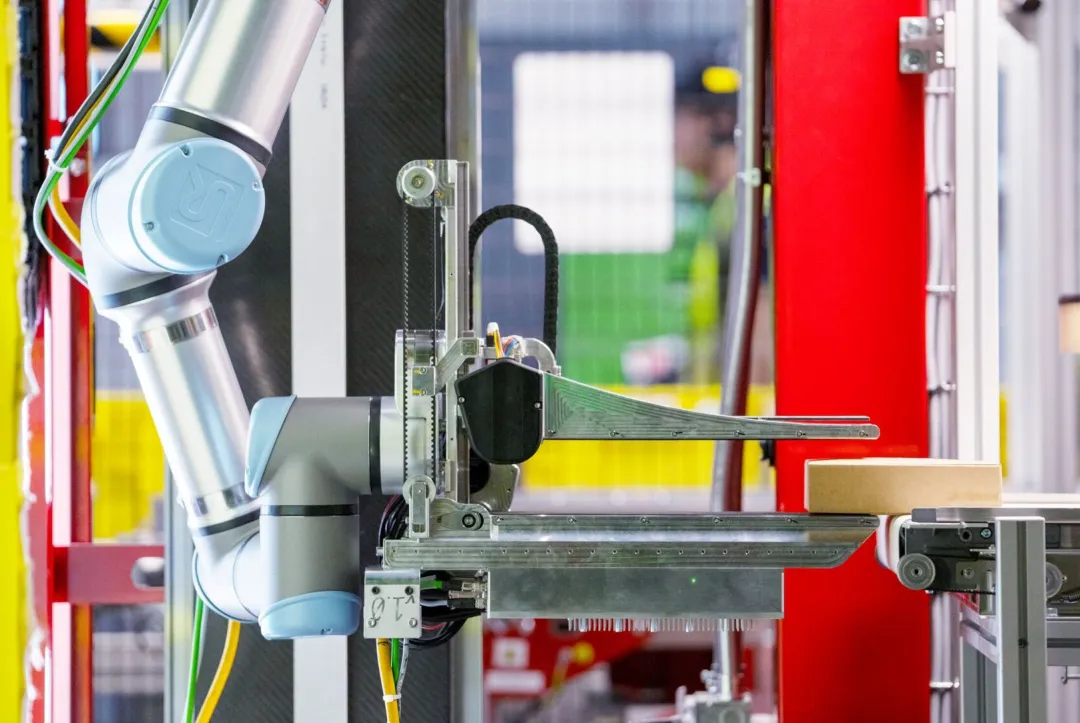

First, physical AI in robotics is showing up in warehouses. Amazon introduced a robot called Vulcan that uses tactile sensing and force feedback.

In a 2025 statement quoted by The Verge, Amazon’s applied science director Aaron Parness said:

“Vulcan represents a fundamental leap forward in robotics.”

The same report says Amazon claims Vulcan can handle about 75% of items in its warehouse inventory and that it has been operating in Spokane and Hamburg, processing half a million orders at the time of reporting.

Second, in logistics, trailer unloading is finally becoming practical for machines. DHL announced a first commercial deployment of the Stretch robot in 2023 for unloading trailers and containers, turning a back-breaking task into something handled by equipment while warehouse staff focus on other work.

Third, physical AI in manufacturing is often less about humanoids and more about eyes. The BMW Group says it has used AI in series production since 2018, including image recognition that compares component images in milliseconds to large sets of reference images to spot deviations.

For a market-scale reality check, the International Federation of Robotics reported 4,281,585 industrial robots operating in factories worldwide in 2023, up 10%, with annual installations above half a million units for the third consecutive year. Those robots are still mostly in structured factory settings. The bet behind Physical AI is that more of them will handle variable tasks in less controlled environments.

Risks, Limits, and What to Watch Next

Here’s the thing: once software meets steel, mistakes leave a mark. Researchers have started treating physical risk control as its own discipline, because robotics that draws on foundation models is expected to move from closed environments into open ones where humans and robots share space.

The hardest limits of Physical AI are not philosophical; they are practical. Data is scarce for rare events like slips, dropped objects, or near misses. Simulation helps, but the reality gap is stubborn, which is why sim-to-real methods and careful transfer are still active research areas.

If I had to bet on what moves the field over the next few years, it is not a single model. It is better environment modeling, better touch sensing, and better evaluation, so teams can measure task success and safety instead of relying on shiny demos. Publicly reported funding signals suggest investors agree that 3D understanding is central to the next wave.
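On the evaluation point, one modest habit is to report success rates with their uncertainty and to count safety events separately rather than folding them into one score. The sketch below uses the Wilson score interval, a standard formula for proportions. The trial counts and the `summarize` helper are hypothetical.

```python
import math


def wilson_interval(successes, trials, z=1.96):
    """Wilson score interval for a success rate (z=1.96 is roughly 95% confidence)."""
    if trials <= 0:
        raise ValueError("need at least one trial")
    rate = successes / trials
    denominator = 1 + z * z / trials
    center = (rate + z * z / (2 * trials)) / denominator
    half_width = z * math.sqrt(
        rate * (1 - rate) / trials + z * z / (4 * trials * trials)
    ) / denominator
    return center - half_width, center + half_width


def summarize(name, successes, trials, safety_violations):
    """Report task success with its uncertainty, and safety events separately."""
    low, high = wilson_interval(successes, trials)
    return (f"{name}: success {successes}/{trials} "
            f"(plausible range {low:.1%} to {high:.1%}), "
            f"safety violations: {safety_violations}")


# Hypothetical numbers: the same 95% success rate from a demo and from a long trial.
print(summarize("demo day", 19, 20, safety_violations=0))
print(summarize("field trial", 1900, 2000, safety_violations=3))
```

Both runs show 95% success, but with 20 trials the plausible range stretches from roughly 76% to 99%, while 2,000 trials narrow it to about two percentage points. A demo with zero safety violations in 20 attempts also says very little about events that happen once in a thousand.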

Frequently Asked Questions

1. What is Physical AI?

Physical AI refers to artificial intelligence systems that perceive, reason, and act in the physical world through machines such as robots, drones, and autonomous devices.

2. How is Physical AI different from traditional AI software?

Unlike software-only AI, Physical AI interacts with real-world environments using sensors, actuators, and embedded hardware.

3. What technologies power Physical AI systems?

Physical AI relies on machine learning, computer vision, sensor fusion, robotics, and real-time control systems.

4. Where is Physical AI used today?

It is commonly used in robotics, autonomous vehicles, smart manufacturing, healthcare devices, and warehouse automation.

5. What are the biggest challenges in Physical AI development?

The main challenges include real-world unpredictability, safety risks, high hardware costs, and the need for real-time decision-making.
